Ramdas Honored for Efforts To Improve Research Reproducibility

Stacy Kish | Monday, January 27, 2020

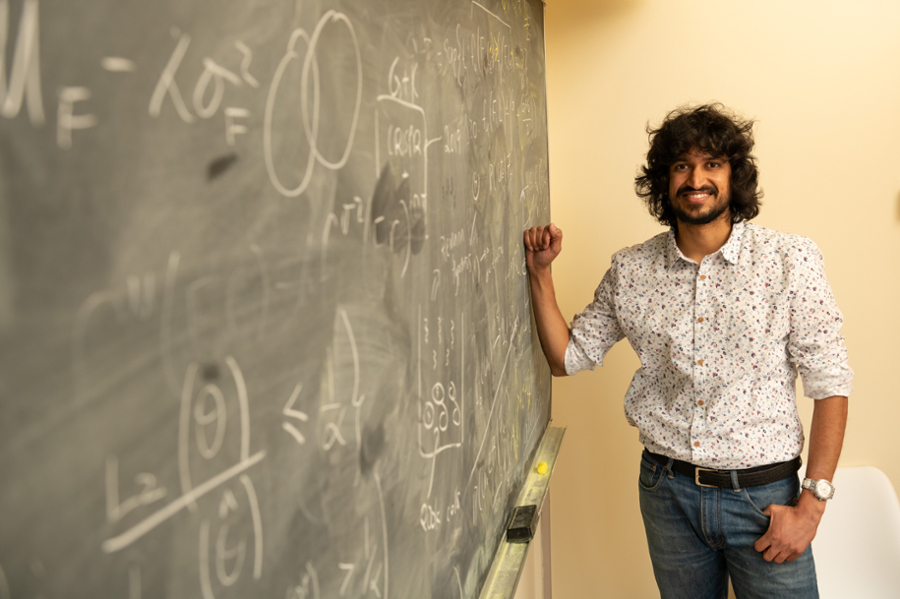

Carnegie Mellon University's Aaditya Ramdas, an assistant professor in the Department of Statistics & Data Science and the Machine Learning Department, has received the National Science Foundation's (NSF) Faculty Early Career Development (CAREER) Award for his project, titled "Online Multiple Hypothesis Testing: A Comprehensive Treatment."

"Arguably, one of the major hurdles to reproducibility of scientific studies is the cherry picking of results among the vast array of tests run or quantities estimated," Ramdas said. "We need 'online' methods to correct for cherry picking, first acknowledging that the problem exists and then designing algorithms that can account and correct for it."

According to Ramdas, statistical methods that improve reproducibility in large-scale scientific studies will help combat growing public distrust in science. The results of this five-year grant could transform how the technology and pharmaceutical industries, as well as the sciences, perform large-scale hypothesis testing. The grant will also allow Ramdas to fund graduate and postgraduate students, preparing the next generation of researchers.

The CAREER award supports junior faculty who exemplify the role of teacher-scholar through outstanding research, excellence in education, and the integration of education and research within the mission of their organizations. These activities build a firm foundation for a lifetime of leadership in integrating education and research.

"Aaditya's work promises a unification of the central ideas underlying a fundamental statistical problem and a further advance in our understanding of the problem itself," said Christopher Genovese, head of the Department of Statistics & Data Science. "This project intersects with deep theoretical questions, raises a host of methodological challenges and will have a significant impact for practitioners across many fields."

Hundreds or thousands of hypothesis tests, which can be used, for example, to evaluate the beneficial effects of different drugs, are often performed over periods of months or years. Over that time, it is natural for some of these tests to report false results simply by chance. Researchers often do not correct for cherry-picking the "best" results, and current statistical techniques are sometimes inadequate for doing so. According to Ramdas, these false discoveries not only raise false hopes for potential treatments but could also waste millions of research dollars on needless follow-up studies.
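To make the multiplicity problem concrete, here is a minimal Python sketch. It is not drawn from Ramdas's project; it assumes, for simplicity, that every tested hypothesis is truly null (there is no real effect), that the tests are independent, and that each is run at a significance level of α = 0.05. The function names are hypothetical. It computes the textbook probability that at least one test yields a false positive, 1 − (1 − α)^m for m tests, along with the expected number of false positives, αm.

```python
def prob_at_least_one_false_positive(alpha: float, num_tests: int) -> float:
    """Chance that at least one of num_tests independent tests of true
    null hypotheses rejects at significance level alpha (assumption:
    all nulls true, tests independent)."""
    return 1.0 - (1.0 - alpha) ** num_tests


def expected_false_positives(alpha: float, num_tests: int) -> float:
    """Expected number of false rejections when every null hypothesis is
    true; each test rejects with probability alpha, so the mean is alpha * m."""
    return alpha * num_tests


if __name__ == "__main__":
    # Illustrative table for a conventional 5% significance level.
    for m in (1, 10, 100, 1000):
        p = prob_at_least_one_false_positive(0.05, m)
        e = expected_false_positives(0.05, m)
        print(f"{m:5d} tests: P(at least one false positive) = {p:.3f}, "
              f"expected false positives = {e:.1f}")
```

Under these assumptions, the chance of at least one false result is about 40% for 10 tests and above 99% for 100 tests, which is why running many uncorrected tests over time all but guarantees some spurious "discoveries."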

"What I'm really interested in is a comprehensive approach that can unify how we handle different error metrics," Ramdas said. "Long-term grants like this one open the door to the kind of research that is more exciting, in that we address open-ended questions where there seems to be light at the end of the tunnel, but the path to get there is still unclear."

In this project, Ramdas will address this "hidden" multiplicity, correcting for selection bias in order to improve long-term reproducibility. He hopes to develop statistical methods that protect against false discoveries while making minimal assumptions. Ramdas also aims to deliver an open-source software package that makes it easier for other researchers to adopt and apply these methods.

Ramdas received a joint Ph.D. in Statistics and Machine Learning from CMU in 2015. He then conducted postdoctoral research at the University of California, Berkeley, before returning to CMU in 2018 as an assistant professor. He teaches courses on statistical methods for machine learning, reproducibility and sequential analysis.

Byron Spice | 412-268-9068 | bspice@cs.cmu.edu<br>Virginia Alvino Young | 412-268-8356 | vay@cmu.edu